Geethu Miriam Jacob and Sukhendu Das

Visualization and Perception Lab

Department of Computer Science and Engineering, Indian Institute of Technology, Madras, India

Accepted in Image and Vision Computing (IMAVIS-2017), Elsevier

Abstract

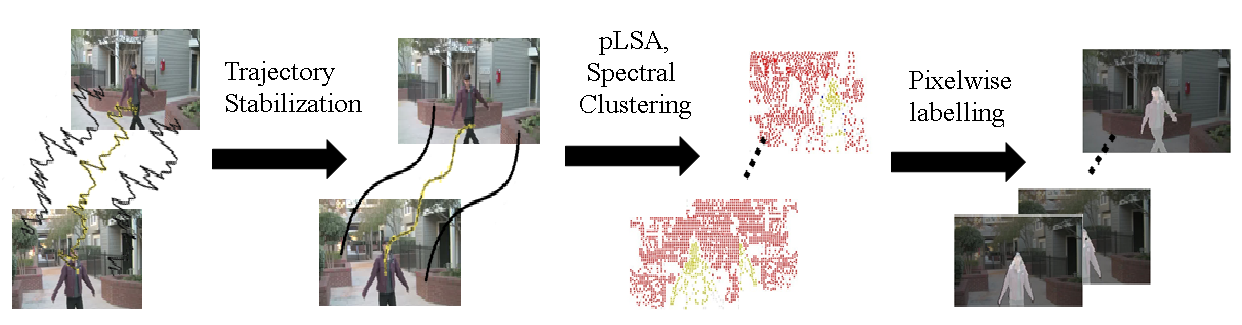

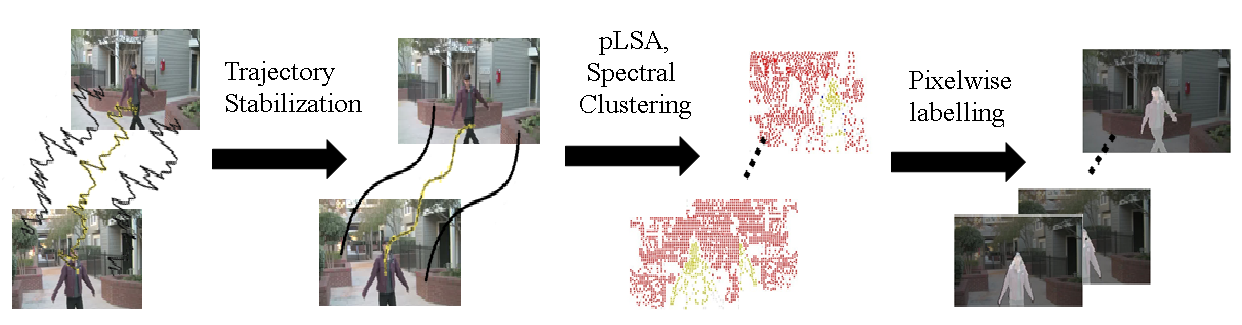

Moving object segmentation in videos is challenging in the presence of large camera movements. Moreover, when the camera motion is jittery, most existing motion segmentation approaches fail. In this work, we propose an optimization framework for segmenting the prominent moving object in jittery videos. A novel Optical Trajectory Descriptor Matrix (OTDM), built on point trajectories, is proposed for this purpose. An optimization function is formulated for stabilizing the trajectories, followed by spectral clustering of the proposed latent trajectories. Latent trajectories are obtained by performing Probabilistic Latent Semantic Analysis (pLSA) on the OTDM, that is, by factorizing the OTDM using KL divergence. This integrated framework yields accurate clustering of the trajectories from jittery videos. Foreground pixel labelling is obtained by using the clustered trajectory coordinates to model the foreground and background in a GraphCut-based energy formulation. Experiments were performed on 16 real-world jittery videos. Results were also generated for a standard segmentation dataset, SegTrackv2, with synthetic jitter incorporated: jitter extracted from a real video is inserted into stable SegTrackv2 videos to analyze performance. Compared with state-of-the-art methods, the proposed method was found to be superior.
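The pLSA step mentioned above can be illustrated as a non-negative factorization under KL divergence. The sketch below is not the authors' implementation: it applies the standard multiplicative updates for KL-divergence matrix factorization (a formulation closely related to pLSA) to a made-up 4×4 matrix standing in for the OTDM. The names `kl_nmf` and `kl_divergence`, the toy matrix `V`, and the choice of two latent components are all assumptions for illustration; how the real OTDM is built is not shown here.

```python
import math
import random


def kl_divergence(V, W, H, eps=1e-12):
    """Generalized KL divergence D(V || WH) for non-negative matrices."""
    total = 0.0
    for i, row in enumerate(V):
        for j, v in enumerate(row):
            wh = sum(W[i][k] * H[k][j] for k in range(len(H))) + eps
            if v > 0:
                total += v * math.log(v / wh)
            total += wh - v
    return total


def kl_nmf(V, rank, iters=200, seed=0, eps=1e-12):
    """Factorize V ~ W H with multiplicative updates that reduce D(V || WH)."""
    rng = random.Random(seed)
    n, m = len(V), len(V[0])
    # Strictly positive random initialization (hypothetical choice).
    W = [[rng.random() + 0.1 for _ in range(rank)] for _ in range(n)]
    H = [[rng.random() + 0.1 for _ in range(m)] for _ in range(rank)]

    def product():
        return [[sum(W[i][k] * H[k][j] for k in range(rank)) + eps
                 for j in range(m)] for i in range(n)]

    for _ in range(iters):
        WH = product()  # computed once, so in-place updates of W are safe
        for i in range(n):
            for k in range(rank):
                num = sum(H[k][j] * V[i][j] / WH[i][j] for j in range(m))
                W[i][k] *= num / (sum(H[k]) + eps)
        WH = product()
        for k in range(rank):
            col_sum = sum(W[i][k] for i in range(n)) + eps
            for j in range(m):
                num = sum(W[i][k] * V[i][j] / WH[i][j] for i in range(n))
                H[k][j] *= num / col_sum
    return W, H


# Toy stand-in for an OTDM: two groups of rows with similar structure.
V = [[5, 4, 0, 1],
     [4, 5, 1, 0],
     [0, 1, 5, 4],
     [1, 0, 4, 5]]

W0, H0 = kl_nmf(V, rank=2, iters=0)   # random initialization only
W, H = kl_nmf(V, rank=2, iters=200)   # after the updates
print("KL before:", kl_divergence(V, W0, H0))
print("KL after: ", kl_divergence(V, W, H))
```

In this sketch, each row of `W` acts as a low-dimensional latent representation of the corresponding row of `V`, which is the kind of representation a subsequent clustering step could consume.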

Flowchart of the Algorithm

Segmentation Results on Jittery Videos

Downloadable Files

More visual segmentation results: click the links below to download the comparative results for each video shot.

| Video | Zhang et al. [1] (CVPR'13) | Papazoglou et al. [2] (ICCV'13) | Ochs et al. [3] (PAMI'14) | Proposed | Performance ratio |
| --- | --- | --- | --- | --- | --- |
| Walk1 | 0.401 | 0.135 | 0.02 | 0.714 | 1.78 |
| Walk2 | 0.009 | 0.123 | 0.001 | 0.839 | 6.82 |
| Cheery_Girl | 0.144 | 0.201 | 0.09 | 0.801 | 3.99 |
| Doll | 0.139 | 0.926 | 0.819 | 0.928 | 1.002 |
| Baby | 0.116 | 0.671 | 0.007 | 0.693 | 1.03 |
| Skating | 0.033 | 0.248 | 0.318 | 0.716 | 2.25 |
| Car | 0.029 | 0.06 | 0.058 | 0.392 | 6.5 |
| Cycling1 | 0.558 | 0.359 | 0.610 | 0.598 | 0.98 |
| Cycling2 | 0.654 | 0.649 | 0.462 | 0.663 | 1.01 |
| Climb | 0.54 | 0.764 | 0.03 | 0.81 | 1.06 |
| Drone1 | 0.715 | 0.755 | 0.658 | 0.769 | 1.01 |
| Drone2 | 0.487 | 0.436 | 0.549 | 0.592 | 1.07 |
| Train | 0.211 | 0.37 | 0.535 | 0.857 | 1.6 |
| Cycling3 | 0.701 | 0.342 | 0.605 | 0.732 | 1.04 |
| Staircase1 | 0.726 | 0.296 | 0.713 | 0.819 | 1.12 |
| Staircase2 | 0.875 | 0.889 | 0.801 | 0.934 | 1.05 |
| Average | 0.396 | 0.452 | 0.392 | 0.741 | 1.95 |

Performance ratio = Proposed / max(CVPR'13, ICCV'13, PAMI'14)
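As a quick check of how the performance ratio is computed, the following minimal sketch applies the formula to two rows of the table. The function name `performance_ratio` and the dictionary layout are illustrative assumptions; the scores are copied from the Walk1 and Cycling1 rows.

```python
def performance_ratio(proposed, baselines):
    """Score of the proposed method divided by the best competing score."""
    return proposed / max(baselines)


# (baseline scores for [1], [2], [3]), proposed score
rows = {
    "Walk1": ([0.401, 0.135, 0.02], 0.714),
    "Cycling1": ([0.558, 0.359, 0.610], 0.598),
}

for name, (baselines, proposed) in rows.items():
    print(name, round(performance_ratio(proposed, baselines), 2))
```

A ratio above 1 means the proposed method scores higher than the best of the three compared methods on that video; a ratio below 1, as for Cycling1, means one of the compared methods scores higher.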

| Video | Zhang et al. [1] | Papazoglou et al. [2] | Ochs et al. [3] | Proposed |
| --- | --- | --- | --- | --- |
| JT1 | 0.284 | 0.647 | 0.587 | 0.667 |
| JT2 | 0.315 | 0.65 | 0.432 | 0.671 |
| JT3 | 0.309 | 0.651 | 0.424 | 0.644 |
| JT4 | 0.264 | 0.679 | 0.42 | 0.70 |

| Video | Zhang et al. [1] | Papazoglou et al. [2] | Ochs et al. [3] | Proposed |
| --- | --- | --- | --- | --- |
| JRL | 0.586 | 0.637 | 0.327 | 0.665 |
| JRM | 0.551 | 0.575 | 0.525 | 0.60 |
| JRH | 0.543 | 0.585 | 0.479 | 0.65 |

References

[1] D. Zhang, O. Javed, and M. Shah, “Video object segmentation through spatially accurate and temporally dense extraction of primary object regions,” in CVPR, 2013.

[2] A. Papazoglou and V. Ferrari, “Fast object segmentation in unconstrained video,” in ICCV, 2013.

[3] P. Ochs, J. Malik, and T. Brox, “Segmentation of moving objects by long term video analysis,” IEEE TPAMI, vol. 36, no. 6, pp. 1187–1200, 2014.